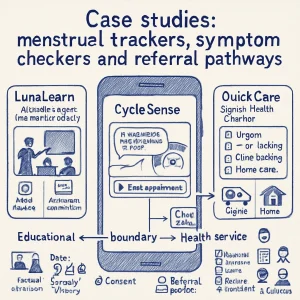

Case studies: menstrual trackers, symptom checkers and referral pathways

In this topic we’ll look at realistic examples that show how apps that look similar can end up on very different sides of the “educational AI” vs “health service” boundary. Small design or messaging changes can push an app across that line — and that shift matters a lot for safety, regulation, consent, data practices and the expectations of young people, families and schools.

I’ll give short case vignettes, call out what changed and why it matters, and then give practical checklists and templates you can use as an educator, designer or policymaker.

Quick framing: why the boundary matters

- Education apps: Aim to increase knowledge, awareness and social-emotional skills. They carry lower regulatory risk but must still protect privacy, be age-appropriate and avoid harmful misinformation.

- Health services: Aim to diagnose, triage, treat or advise on care. They face higher regulatory scrutiny (medical device rules, clinical validation), professional liability and stronger safeguarding obligations.

- The same features (cycle charts, symptom forms, AI-generated text) can be harmless in one context and risky in another depending on claims, outputs and integration with health pathways.

Three short case studies

Case 1 — “LunaLearn”: Classroom menstrual literacy tool (clearly educational)

Scenario

- Used in secondary school health lessons.

- Students log cycle dates and mood as part of a classroom activity; data is stored only on the student’s device, or shared as anonymized aggregates for class analytics.

- App provides short, age-appropriate explanations about menstrual physiology, anatomy, puberty and consent. No diagnostic language, no triage.

- Includes social-emotional learning (SEL) content about managing mood swings, self-care tips and optional links to school counselor scheduling.

Why this is educational

- Purpose: explicitly for learning and classroom exercises.

- Outputs: general information and supportive coping strategies, not diagnoses or risk scores.

- Integration: does not link to clinical services or EHRs.

- Data: minimal and local; optional anonymous class analytics with opt-in.

Design and policy implications

- Focus heavily on age-appropriate content and inclusivity (trans and non-binary students, cultural sensitivity).

- Clear consent for any sharing; allow anonymous use.

- Provide guidance for teachers on how to facilitate and when to escalate (e.g., if a student discloses self-harm risk).

- Label the app clearly: “Educational tool — not medical advice.”

Why it matters

- Students expect privacy and nonjudgmental learning.

- Educators need clear escalation protocols and training but don’t need the app to meet clinical evidence thresholds.

Case 2 — “CycleSense”: Consumer menstrual tracker with AI health suggestions (moves toward health service)

Scenario

- Users log period dates, symptoms, cycle length.

- The app’s AI analyzes patterns and generates statements like “You may have irregular cycles consistent with PCOS” or “Your symptoms are consistent with early pregnancy — consider a test and contacting a clinician.”

- The app suggests action: in-app “book an appointment” buttons that connect to telehealth clinics and gives a risk score (low/medium/high).

What changed from Case 1

- Diagnostic/clinical language and risk scoring.

- Direct linkage to clinical services and booking.

- The app makes actionable health recommendations.

Why this is now a health service

- Intended purpose expands to triage/clinical indication.

- Outputs could influence health decisions (testing, medication).

- Likely falls under medical device regulation in many jurisdictions; needs clinical validation, quality systems, possibly clinician oversight.

Design and policy implications

- Must clinically validate the AI’s predictions/triage logic.

- Implement robust data protection, given sensitive health data and third-party clinical integrations.

- Secure proper regulatory classification; the app may need to be registered as a medical device, with post-market surveillance and adverse event reporting.

- Build clear, evidence-based referral pathways and ensure clinicians receiving referrals understand the app’s limitations.

Why it matters

- Misclassifying a product like this as purely educational increases safety and legal risk.

- Young users may act on app recommendations without adult support; this raises consent and safeguarding issues — especially for minors.

Case 3 — “QuickCare Sexual Health Checker”: Symptom checker tied to clinic triage (clearly health service)

Scenario

- A youth-facing web/mobile symptom checker asks about STI symptoms, abdominal pain and unusual bleeding.

- It uses AI + rule-based logic to triage: “Seek emergency care now,” “Book same-day clinic,” or “Home care and watchful waiting.”

- When users select “Book,” the app sends a structured referral packet to the partner clinic, including user-entered history. Users can also opt for a warm handoff phone call from a clinician.

- Built-in mandatory reporting triggers fire if a user discloses self-harm or abuse.

Why this is a health service

- Triage decisions and urgent care advice have direct clinical consequences.

- Integrated with clinics and clinical workflows.

- Contains reporting/escalation mechanisms and clinical documentation.

Design and policy implications

- Very strong clinical governance is required: clinicians must validate triage algorithms, oversee escalation criteria and review audit logs.

- Safeguarding workflows must meet local laws (child protection, mandatory reporting).

- Interoperability and secure data transfer with EHRs; policies on who can access data.

- Informed consent processes must explain purpose, data sharing with clinics, and limits to confidentiality (e.g., reporting abuse).

Why it matters

- The app has greater potential to help but also to harm if triage is incorrect, data leaks happen, or safeguarding is mishandled.

What small changes push an app across the boundary?

Common “push” factors

- Using diagnostic language (“you have X,” “likely PCOS,” “probable STI”).

- Providing risk scores, urgency levels, or actionable clinical advice.

- Connecting to booking systems, EHRs, or clinician workflows.

- Explicitly marketed for diagnosis, monitoring of a medical condition, or guiding treatment.

- Claiming clinical accuracy, or that algorithms were trained on clinical datasets.

- Collecting and processing sensitive, identifiable health data long-term or centrally.

If any of these appear, reassess the app as a potential health service and follow stricter governance and regulatory pathways.
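One way to make this reassessment routine is to record the push factors as explicit feature flags and check them whenever the product changes. The sketch below is illustrative only: `AppProfile`, its field names and the two helper functions are hypothetical, with one flag per push factor listed above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AppProfile:
    """Hypothetical feature flags, one per push factor listed above."""
    uses_diagnostic_language: bool = False
    gives_risk_scores_or_clinical_advice: bool = False
    connects_to_clinical_systems: bool = False
    marketed_for_diagnosis_or_treatment: bool = False
    claims_clinical_accuracy: bool = False
    stores_identifiable_health_data_centrally: bool = False


def push_factors(profile: AppProfile) -> list[str]:
    """Return the names of every push factor that is switched on."""
    return [f.name for f in fields(profile) if getattr(profile, f.name)]


def needs_health_service_review(profile: AppProfile) -> bool:
    """Any single push factor is enough to trigger a reassessment."""
    return bool(push_factors(profile))


# Example profiles loosely based on Case 1 and Case 2.
luna_learn = AppProfile()
cycle_sense = AppProfile(
    uses_diagnostic_language=True,
    gives_risk_scores_or_clinical_advice=True,
    connects_to_clinical_systems=True,
)

print(needs_health_service_review(luna_learn))
print(push_factors(cycle_sense))
```

A check like this only surfaces the question; the answer still has to come from clinical and legal review.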

Practical checklist: Is this educational or a health service?

Answer these questions. If you answer “yes” to any of them other than the first (“inform/teach only”), you likely need to treat the product as a health service.

Intended purpose

- Is the app meant to inform/teach only? (educational)

- Or is it meant to diagnose, triage, recommend medical tests/treatment? (health)

Claims and outputs

- Does the app use clinical/diagnostic language, risk scoring, or urgency labels?

- Does it tell users what medical steps to take?

Integration

- Does it send data to clinics, book appointments, or integrate with EHRs?

Audience and context

- Is it targeted at minors or vulnerable populations?

- Is it used in school settings where staff will act on outputs?

Data and model

- Does it process or store sensitive health data centrally?

- Is an AI model making predictive clinical judgments?

Liability and regulation

- Is the vendor making claims that would attract regulatory review (FDA, EU MDR, MHRA)?

- Is there clinician oversight?

If you’re unsure, default to treating it like a health service until legal and clinical review clears it.

Referral pathways: what they need to include (practical steps)

A safe referral pathway isn’t just a “book now” button. It should include:

Triggering rules

- Exact criteria that create a referral (e.g., risk score threshold, symptom combinations, self-harm disclosure).

- Who sets and reviews those criteria (clinical team).

Consent and transparency

- Clear explanation at point of use: what will be shared, with whom, why, and what confidentiality limits apply.

- For minors, explain parental involvement rules and local legal exceptions.

Data packet structure

- Minimum data needed for triage (symptoms, relevant history), not full transcript.

- Use structured fields to support clinical review, with timestamps and source markers.

Warm handoff options

- Immediate phone callback from a clinician for high-risk cases.

- Secure messaging or scheduled telehealth appointments for non-urgent referrals.

Documentation and audit

- Log all referrals, who accessed data, timestamps, and clinician responses.

- Mechanism to follow up on whether referral resulted in care.

Safeguarding and legal compliance

- Automated triggers for mandatory reporting where required.

- Protocols for consent overrides in emergencies.

Escalation pathway map

- Clear flowchart showing user path: self-care advice → clinic booking → urgent referral → emergency services.

- Roles and contacts (school counselor, local clinic, crisis lines).
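To make the triggering rules and data packet structure concrete, here is a minimal sketch. Everything in it is an assumption for illustration: the threshold value, the red-flag symptom names and the class and function names are hypothetical, and real criteria would be set and reviewed by the clinical team. It does not model consent overrides for emergencies.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Optional

# Hypothetical criteria; in practice the clinical team sets and reviews these.
RISK_SCORE_THRESHOLD = 0.7
RED_FLAG_SYMPTOMS = {"heavy bleeding", "severe abdominal pain"}


@dataclass
class SymptomCheck:
    user_ref: str                  # pseudonymous reference, not a name
    symptoms: set[str]
    risk_score: float
    self_harm_disclosed: bool = False
    free_text: str = ""            # full transcript; never forwarded


@dataclass
class ReferralPacket:
    """Minimum structured data for clinical review."""
    user_ref: str
    symptoms: list[str]
    reasons: list[str]
    source: str = "self-reported"  # source marker
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def referral_reasons(check: SymptomCheck) -> list[str]:
    """List every triggering rule that the check satisfies."""
    reasons = []
    if check.risk_score >= RISK_SCORE_THRESHOLD:
        reasons.append("risk_score_threshold")
    if check.symptoms & RED_FLAG_SYMPTOMS:
        reasons.append("red_flag_symptom")
    if check.self_harm_disclosed:
        reasons.append("self_harm_disclosure")
    return reasons


def build_packet(check: SymptomCheck, consent_given: bool) -> Optional[ReferralPacket]:
    """Build a packet only when a rule fired and the user consented."""
    reasons = referral_reasons(check)
    if not reasons or not consent_given:
        return None
    return ReferralPacket(
        user_ref=check.user_ref,
        symptoms=sorted(check.symptoms),
        reasons=reasons,
    )
```

Calling `asdict()` on the returned packet gives a plain dictionary for transfer; note that the free-text transcript has no field in the packet, so it cannot be forwarded by accident.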

Sample referral flow (simple)

- User completes symptom check → system finds “high-risk” red flag → show urgent message “seek emergency care” + call 911/local emergency number + offer to notify school nurse/guardian per policy → if user confirms, system initiates warm handoff to clinician and logs action.
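The flow above could be sketched as a function that returns the ordered steps and writes each one to an audit log. The action names, the in-memory `audit_log` list and the function signatures are hypothetical; a real system would use durable, access-controlled storage and follow local policy on who is notified.

```python
from datetime import datetime, timezone

# In-memory stand-in for a durable audit store (assumption for this sketch).
audit_log: list[dict] = []


def log_action(user_ref: str, action: str) -> None:
    """Record what happened, for whom, and when."""
    audit_log.append({
        "user_ref": user_ref,
        "action": action,
        "at": datetime.now(timezone.utc).isoformat(),
    })


def run_referral_flow(user_ref: str, high_risk: bool,
                      user_confirms_handoff: bool) -> list[str]:
    """Return the ordered steps shown to the user, logging each one."""
    if not high_risk:
        actions = ["show_self_care_or_booking_options"]
    else:
        actions = [
            "show_urgent_message",
            "show_emergency_number",
            "offer_notify_school_nurse_or_guardian",
        ]
        # The warm handoff starts only after the user confirms.
        if user_confirms_handoff:
            actions.append("start_warm_handoff")
    for action in actions:
        log_action(user_ref, action)
    return actions
```

Keeping the urgent message and emergency number ahead of any confirmation step means a high-risk user always sees them, whether or not they accept the handoff.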

Wording snippets you can reuse (adapt to local law)

- Educational labeling: “This tool is for educational purposes only. It provides general information to support learning about bodies and health. It is not medical advice.”

- Consent for referral: “By tapping ‘Send to Clinic’ you consent to share the information you provided with [Clinic Name] to arrange care. If you are under [local age], note that [policy about parental notification] applies.”

- Triage alert (high risk): “Your answers suggest you may need urgent medical attention. Please call emergency services now or we can connect you to a clinician for immediate support.”

Note: disclaimers do not replace clinical governance or regulatory requirements.

Data and privacy practices to apply in both educational and clinical cases

- Minimize data collection — collect only what’s necessary.

- Default to local storage for sensitive inputs in educational contexts; if cloud storage is necessary, encrypt in transit and at rest.

- Retention policy — clear time limits; delete on user request.

- Pseudonymize when integrating with clinical workflows; use secure, consented identifiers.

- Role-based access control — only authorized staff/clinicians can see identifiable data.

- Explain sharing, third-party processing and cross-border transfers in plain language.
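Three of these practices (pseudonymization, retention limits and role-based access) translate fairly directly into code. The sketch below uses Python's standard `hmac` and `hashlib` modules; the 90-day retention period, the role names and the permission names are hypothetical values chosen for illustration, not recommendations.

```python
import hashlib
import hmac
from datetime import datetime, timedelta, timezone

# Hypothetical policy values; set these from your own retention and access policies.
RETENTION = timedelta(days=90)
ROLE_PERMISSIONS = {
    "clinician": {"read_identifiable"},
    "teacher": {"read_aggregate"},
}


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym with a keyed hash (HMAC-SHA256).

    Without the key, the pseudonym cannot be recomputed from the user ID.
    """
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def is_expired(created_at: datetime, now: datetime) -> bool:
    """True when a record is older than the retention period."""
    return now - created_at > RETENTION


def can_access(role: str, permission: str) -> bool:
    """Unknown roles get no permissions by default."""
    return permission in ROLE_PERMISSIONS.get(role, set())


now = datetime.now(timezone.utc)
print(is_expired(now - timedelta(days=120), now))
print(can_access("teacher", "read_identifiable"))
```

A keyed hash is used rather than a plain hash so that someone who knows a student ID cannot recompute the pseudonym without the secret key; key storage and rotation are out of scope here.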

Inclusion, bias and youth-centered design

- Co-design with young people, including trans and non-binary users and those with disabilities.

- Test models on diverse datasets; clinical validation must cover diversity in age, race, body type and hormonal profile.

- Avoid gendered assumptions: allow users to self-describe bodies, identities and pronouns.

- Design for different literacy levels; use visuals, audio and multiple languages.

Evaluation & monitoring (what to measure)

- Clinical accuracy (if health service): sensitivity, specificity of triage/predictions validated against clinical outcomes.

- Safety incidents: wrong advice, missed red flags, adverse events.

- Usability and acceptability with youth and educators.

- False positive/negative rates and bias analysis across demographic groups.

- Referral completeness: percent of referrals that led to appropriate clinical follow-up.
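As a sketch of how the accuracy and bias measures could be computed together, the function below calculates sensitivity (the share of truly urgent cases the triage flagged) and specificity (the share of non-urgent cases it correctly left unflagged) separately for each demographic group. The record format and function name are assumptions, and the group labels in the example are placeholders rather than real data.

```python
from collections import defaultdict


def triage_metrics(records):
    """Compute per-group sensitivity and specificity.

    records: iterable of (group, predicted_urgent, actually_urgent) tuples.
    A metric is None when the group has no cases to compute it from.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, predicted, actual in records:
        if predicted and actual:
            key = "tp"   # flagged, and really urgent
        elif predicted:
            key = "fp"   # flagged, but not urgent
        elif actual:
            key = "fn"   # missed red flag
        else:
            key = "tn"   # correctly not flagged
        counts[group][key] += 1

    results = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        results[group] = {
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return results


# Made-up example records: (group, predicted_urgent, actually_urgent).
example = [
    ("group_a", True, True), ("group_a", False, True),
    ("group_a", False, False), ("group_a", True, False),
    ("group_b", True, True), ("group_b", False, False),
]
print(triage_metrics(example))
```

A gap in sensitivity between groups means red flags are being missed more often for some users than others, which is the kind of finding the bias analysis is meant to surface.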

Practical recommendations for educators, designers and policymakers

For educators

- Only deploy apps that are clearly labeled and whose scope is appropriate to a school setting.

- Ensure school policies define when staff should act on app outputs and how to protect student privacy.

- Train staff on referral pathways, confidentiality limits and mandatory reporting.

For designers

- Define product scope early — educational or clinical. Don’t blur both without committing to clinical governance.

- If adding any triage or diagnostic features: involve clinicians, legal/regulatory experts and run clinical trials.

- Build clear consent, opt-in sharing, and age-aware interfaces.

For policymakers / procurement leads

- Require vendors to declare clinical claims, regulatory status, data practices and evidence of co-design with youth.

- Ask for a safety and escalation plan, including who will be contacted in emergencies.

- Institute minimum contract clauses for safeguarding, audit rights and data deletion.

Quick decision rubric (one-page cheat sheet)

- Are you making health claims or giving medical advice? — Yes → treat as health service.

- Do you connect to clinics/EHRs or allow booking/treatment? — Yes → health service.

- Is the app used in classrooms only for learning, with local storage and no clinical output? — Yes → educational tool.

- If “maybe” or “unsure” anywhere — pause development, consult clinicians and legal/regulatory advisors.
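The rubric can also be written down as a small decision function, which is handy for procurement forms or design reviews. This is a sketch under stated assumptions: the `Answer` enum, the function name and the result strings are hypothetical. Two design choices go beyond the rubric text: a definite "yes" on either health question wins over an "unsure" elsewhere, and a "no" to all three questions is treated as unresolved.

```python
from enum import Enum


class Answer(Enum):
    YES = "yes"
    NO = "no"
    UNSURE = "unsure"


HEALTH_SERVICE = "health service"
EDUCATIONAL = "educational tool"
PAUSE = "pause and consult"


def classify(makes_health_claims: Answer,
             connects_to_care: Answer,
             classroom_only_local_no_clinical: Answer) -> str:
    """Apply the quick decision rubric to three answers."""
    # A definite "yes" on either health question settles it.
    if Answer.YES in (makes_health_claims, connects_to_care):
        return HEALTH_SERVICE
    answers = (makes_health_claims, connects_to_care,
               classroom_only_local_no_clinical)
    # Any remaining uncertainty means stopping for clinical and legal advice.
    if Answer.UNSURE in answers:
        return PAUSE
    if classroom_only_local_no_clinical is Answer.YES:
        return EDUCATIONAL
    # "No" to everything is not covered by the rubric; treat as unresolved.
    return PAUSE


print(classify(Answer.NO, Answer.NO, Answer.YES))
print(classify(Answer.NO, Answer.UNSURE, Answer.YES))
```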

Final takeaway (in plain language)

Two apps that look the same can be legally, ethically and operationally very different depending on what they claim, what outputs they generate, and whether they link into care. When you design or choose tools for young people, be explicit about purpose, build safe referral pathways, protect privacy, and involve clinicians and young people early. It’s much easier — and safer — to design with those boundaries in mind from the start than to retrofit them later.
