Welcome! In this lesson we’ll tackle a tricky but vital question: when does an AI tool used in schools stay an educational resource, and when does it cross into being a health service or medical device? Crossing that line brings legal, ethical and safety implications, especially when the users are minors.

This is a practical, hands-on lesson aimed at educators, curriculum designers, edtech teams and policy makers who need to make safe, accountable choices about AI tools that touch students’ health, sexuality education and well‑being.

What you’ll get from this lesson

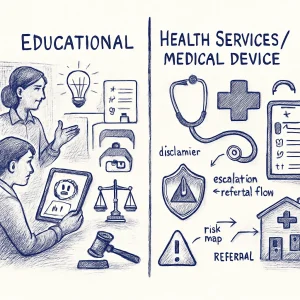

- Clear ways to tell educational tools apart from health services or regulated medical devices.

- A sense of the key regulatory and classification issues that apply to products used by minors.

- Concrete case studies (menstrual trackers, symptom checkers, referral pathways) to see risks and responses in context.

- Design and legal strategies you can use right away: scoping, disclaimers, escalation/referral flows and partnership models with health providers.

- A practical activity where you’ll map regulatory risk for a sample app so the concepts stick.

Learning objectives

By the end of this lesson you will be able to:

- Distinguish when an AI tool is educational vs. when it likely qualifies as a health service or medical device.

- Identify major regulatory considerations for products aimed at children and adolescents.

- Evaluate real-world examples and spot where risk, duty of care and regulation matter.

- Draft basic design and legal controls (scope limits, user-facing disclaimers, escalation pathways).

- Complete a simple regulatory risk map for an app and recommend mitigations or next steps.

Lesson overview (what we’ll cover)

- Distinguishing educational tools from health services/medical devices — Practical criteria and red flags.

- Regulatory and classification issues (especially for minors) — Who regulates what, and why it matters for schools and vendors.

- Case studies — Menstrual trackers, symptom checkers and how referral pathways can (and should) work.

- Design and legal strategies — Scoping, clear disclaimers, escalation rules, data minimization and partnership approaches.

- Activity: regulatory risk mapping for a sample app — Hands-on group or individual mapping exercise with a template.

How this lesson is delivered

- Format: short readings, guided case studies, discussion prompts, and a hands-on activity (risk map).

- Time: ~75–120 minutes (self-paced; depends on how deeply you discuss the activity).

- Materials: lesson slides/readings, sample app spec, regulatory risk map template (provided in the lesson resources).

- Suggested group work: small breakout discussions to compare classifications and mitigation choices.

Prerequisites and helpful background

- Basic familiarity with AI in education and common edtech features.

- Some awareness of privacy and data protection basics (familiarity with COPPA, GDPR or local equivalents is helpful but not required).

- Curiosity about ethical and legal tradeoffs — you don’t need to be an attorney to do the exercises; the goal is practical, not legal advice.

Ethical and safety notes

- This lesson is about making safer design and policy choices — it is not legal advice. Local laws and medical regulations vary.

- When in doubt, escalate to qualified legal counsel, child protection leads and licensed health professionals.

- Prioritize privacy, informed consent (age-appropriate), mandatory reporting obligations and minimizing harm when designing or approving tools.

How to get the most from this lesson

- Bring examples from your context (an app you’re considering, a tool in your classroom) and use the risk map to evaluate it.

- Be ready to question assumptions — what seems “just educational” can have real health consequences for young people.

- Use the case studies as templates for conversations with vendors, school leaders and health partners.

Ready? Let’s draw the lines thoughtfully — so the tools we use to support learners don’t unintentionally become sources of harm or liability. Next up: distinguishing educational tools from health services.