AI‑enabled prevention and support: detection, moderation and ethical monitoring

Quick welcome: this topic explains when and how AI can responsibly help identify risks and support learners — without overstepping privacy, consent, or trust. It mixes principles, practical steps, and short activities you can use right away with teams who build classroom tools, learning platforms, or school policies.

Learning goals

- Understand the kinds of risk signals AI can surface (and its limits).

- Know the ethical guardrails for detection, moderation and monitoring.

- Be able to design a human-centered workflow: detection → triage → support → documentation.

- Apply concrete checklists, consent language, and escalation templates for classroom or product use.

Why this matters (short)

AI can spot patterns humans miss — bullying trends, sudden drops in engagement, language suggesting self‑harm, grooming signals. That makes it powerful for prevention and early support. But it can also misread context, harm privacy, and erode trust if used without consent and clear boundaries. The goal: use AI to help people, not surveil them.

Core principles (always keep these in mind)

- Purpose limitation: only collect and analyze data for clearly stated, learner‑benefit goals.

- Minimization: gather the least data needed; avoid continuous full surveillance when targeted checkpoints suffice.

- Transparency & consent: learners and caregivers should know what is monitored, why, and how alerts will be handled.

- Human‑in‑the‑loop: AI flags — humans decide. Never let automated systems make final safeguarding decisions.

- Equity & bias awareness: test systems across languages, cultures, and neurodiversity; watch for disparate false positives/negatives.

- Privacy & security: encrypt sensitive data, restrict access, and define short retention periods.

- Proportionality and least intrusion: escalate with the least invasive, most supportive steps first.

Types of detection and what they can (and can’t) do

- Text analysis (chat, essays, forum posts)

  - Can flag keywords, sentiment shifts, patterns like repeated self‑harm language, or grooming phrases.

  - Limits: sarcasm, slang, context, local idioms; high false positive risk.

- Behavior analytics (logins, drop in participation, abrupt grade changes)

  - Can surface disengagement or sudden changes that often accompany mental health issues.

  - Limits: the same changes often have causes unrelated to risk (family events, connectivity problems, boredom), so human follow‑up is needed.

- Image/audio analysis (photos shared, voice patterns)

  - Can detect explicit content, some emotion cues.

  - Limits: high privacy risk and strong potential for misinterpretation; stricter scrutiny required before use.

- Multimodal signals (combine the above)

  - Stronger signal when thoughtfully combined (e.g., text + drop in attendance).

  - Limits: adds complexity and aggregate privacy concerns.

Responsible detection: design checklist

- Define the protective purpose (what harm are you trying to prevent?)

- Map exactly which data sources are required — and why.

- Use data minimization: prefer derived features (e.g., “sentiment score”) over raw content storage when possible.

- Set conservative thresholds to reduce false positives, and test how each threshold trades false alarms against missed concerns.

- Require human review before any outreach or disciplinary action.

- Document the detection logic, validation results and known failure modes.

- Provide clear opt‑out or alternative participation pathways where feasible.
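The minimization and threshold items in this checklist can be sketched in code. This is a minimal illustration, not a reference implementation: it assumes a hypothetical upstream model that outputs a risk score between 0 and 1, and the names `DerivedSignal`, `should_queue_for_review`, `flag_rate` and the value `0.85` are all invented for the example.

```python
from dataclasses import dataclass

# Hypothetical threshold; a real value would come from validation data.
REVIEW_THRESHOLD = 0.85


@dataclass(frozen=True)
class DerivedSignal:
    """Derived features only: no raw message text is stored here."""
    learner_ref: str                      # pseudonymous reference, not a name
    risk_score: float                     # 0.0-1.0, from an assumed upstream model
    matched_categories: tuple[str, ...]   # e.g. ("self_harm_language",)


def should_queue_for_review(signal: DerivedSignal,
                            threshold: float = REVIEW_THRESHOLD) -> bool:
    """Return True when the signal should be sent to a human reviewer.

    This only queues a flag for review. It never triggers outreach
    or disciplinary action by itself.
    """
    if not 0.0 <= signal.risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    return signal.risk_score >= threshold


def flag_rate(scores: list[float], threshold: float) -> float:
    """Share of scores at or above a threshold, for testing threshold behavior."""
    if not scores:
        return 0.0
    return sum(s >= threshold for s in scores) / len(scores)
```

Running `flag_rate` over historical scores at several candidate thresholds gives a rough picture of how many alerts reviewers would receive before anything goes live.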

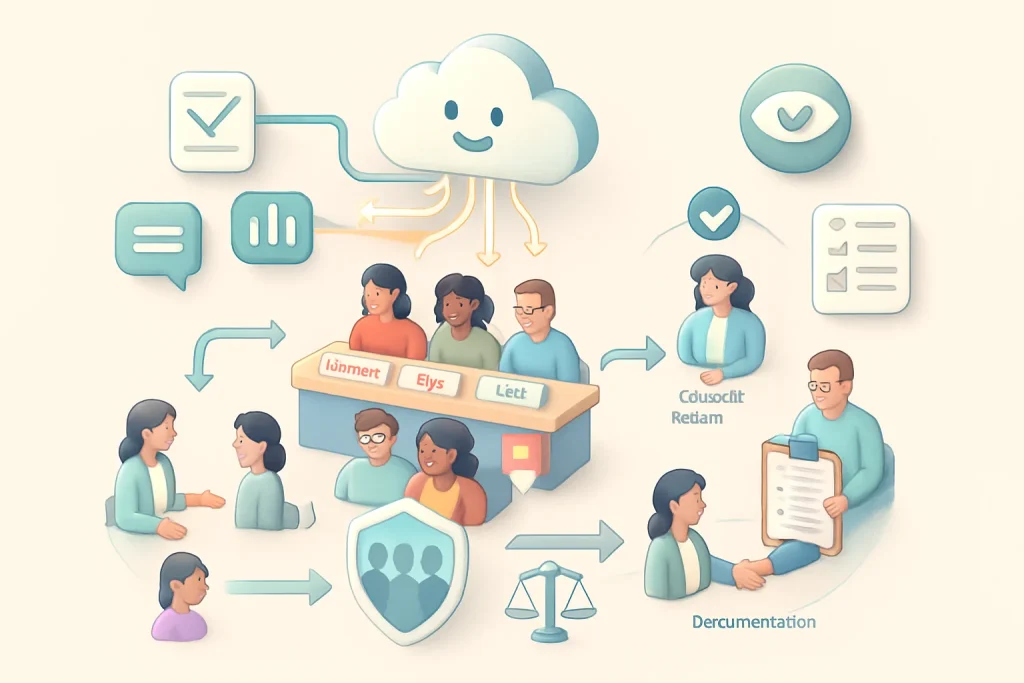

A practical, human-centered workflow (recommended)

- Signal detection (AI flags possible concern)

  - Keep alerts limited to a short, curated set of staff roles who are trained.

- Triage (human reviewer assesses context and urgency)

  - Use a standard rubric: Immediate danger / High concern / Low concern / False positive.

- Action (supportive, proportional interventions)

  - Immediate danger → emergency protocols (call guardians + emergency services if required).

  - High concern → private check‑in with learner + referral to counselor.

  - Low concern → resource nudges, monitor trend.

- Documentation & follow‑up

  - Log actions, outcomes, and whether the AI flag was valid. Use this to retrain models and adjust thresholds.

- Feedback & appeal

  - Allow learners (and guardians where appropriate) to ask about or appeal actions taken.
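One way to keep the rubric and its actions consistent across reviewers is to encode them once. The sketch below is illustrative only: `TriageLevel`, `RECOMMENDED_ACTIONS` and `record_triage` are hypothetical names, and the action strings simply paraphrase the workflow in this section.

```python
from enum import Enum


class TriageLevel(Enum):
    IMMEDIATE_DANGER = "immediate_danger"
    HIGH_CONCERN = "high_concern"
    LOW_CONCERN = "low_concern"
    FALSE_POSITIVE = "false_positive"


# Actions paraphrase the workflow text; the wording is illustrative.
RECOMMENDED_ACTIONS = {
    TriageLevel.IMMEDIATE_DANGER: ["follow emergency protocol"],
    TriageLevel.HIGH_CONCERN: ["private check-in with learner",
                               "referral to counselor"],
    TriageLevel.LOW_CONCERN: ["send resource nudge", "monitor trend"],
    TriageLevel.FALSE_POSITIVE: ["log for model tuning"],
}


def record_triage(flag_id: str, reviewer_id: str, level: TriageLevel) -> dict:
    """Build a log entry for a human reviewer's triage decision."""
    if not reviewer_id:
        # The level is always chosen by a person, never by the model.
        raise ValueError("a human reviewer must make the triage decision")
    return {
        "flag_id": flag_id,
        "reviewer_id": reviewer_id,
        "level": level.value,
        "actions": list(RECOMMENDED_ACTIONS[level]),
        "flag_was_valid": level is not TriageLevel.FALSE_POSITIVE,
    }
```

The `flag_was_valid` field is what later feeds threshold adjustment and model retraining, so it is worth recording on every entry.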

Escalation templates and scripts (examples you can adapt)

- Initial supportive check‑in (for school staff)

  - “I noticed you haven’t been participating recently and I wanted to check in. Is everything okay? Is there anything I can support you with?”

- High‑concern outreach (private, non‑alarmist)

  - “I read something that made me a bit worried about your safety. I care about you and want to make sure you’re okay. Can we talk now or is there a better time?”

- When emergency services are needed

  - Follow your local mandated reporting rules; keep communication factual and focused on learner safety.

Consent, notice and transparency (practical language)

- For learners (age‑appropriate)

  - “This platform checks messages for words or patterns that might mean someone is hurting. If the system is worried, a trained staff member will check and talk with you or your caregiver to make sure you’re safe. You can ask us how it works and what it saw.”

- For caregivers/guardians

  - “We use an automated system that highlights certain messages or behavior changes so school staff can quickly support students who might be at risk. The system does not make decisions; a staff member reviews alerts before any action. Data is stored for X days and only accessible to a small, trained team.”

- Consent checklist

  - Who is monitored

  - What data is analyzed and why

  - Who sees alerts and how they are handled

  - Retention times, third‑party sharing, opt‑out options

  - Contact for questions/appeals

Human‑in‑the‑loop rules (must-haves)

- No automated punitive action (suspension, grade change) without human review.

- Triage reviewers must be trained (cultural competence, trauma‑informed responses, child protection laws).

- Maintain clear role boundaries: technical staff tune models but do not handle sensitive outreach.

- Log reviewer decisions for auditing and continuous improvement.

Mitigating bias and harm

- Validate models on representative samples that cover the languages, ages, genders, neurodiversity, and socioeconomic backgrounds of the learners you serve.

- Track false positive/negative rates across groups and aim for parity.

- Avoid proxies that encode sensitive attributes (e.g., using ZIP code as an input to a risk score).

- Build in “explainability” — simple reasons why a flag occurred (e.g., “flagged because of repeated self‑harm phrases in two messages”).

- Regularly consult with learners and community stakeholders on thresholds, tolerances and harms.
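Tracking error rates across groups can start with a simple tally of reviewed cases. The sketch below assumes each case is a `(group, flagged, at_risk)` tuple, where `at_risk` is the human-confirmed outcome; the function name and record shape are hypothetical. In practice, false negatives are hard to observe because unflagged learners are rarely reviewed, so treat the false negative rate as a lower bound.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Compute false positive and false negative rates per group.

    Each record is a (group, flagged, at_risk) tuple: `flagged` is the
    system's output, `at_risk` is the human-confirmed outcome.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0, "tn": 0})
    for group, flagged, at_risk in records:
        if flagged and at_risk:
            counts[group]["tp"] += 1
        elif flagged and not at_risk:
            counts[group]["fp"] += 1
        elif not flagged and at_risk:
            counts[group]["fn"] += 1
        else:
            counts[group]["tn"] += 1

    rates = {}
    for group, c in counts.items():
        negatives = c["fp"] + c["tn"]   # learners who were not at risk
        positives = c["fn"] + c["tp"]   # learners who were at risk
        rates[group] = {
            # None means the rate is undefined (no such cases in this group).
            "false_positive_rate": c["fp"] / negatives if negatives else None,
            "false_negative_rate": c["fn"] / positives if positives else None,
        }
    return rates
```

Comparing the resulting rates side by side shows whether one group is flagged in error more often than another, which is the disparity the parity goal is meant to catch.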

Privacy, security and data lifecycle

- Prefer ephemeral signals over long‑term content storage. If raw content must be stored, justify it.

- Use encryption at rest and in transit; limit access with role‑based permissions.

- Define and publish retention schedules (e.g., alert details: 90 days; raw messages: 14 days unless required).

- Avoid sharing with third parties unless necessary and covered by data agreements.

- Include data deletion/removal processes for appeals or errors.
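A published retention schedule is easier to enforce when it is also expressed as data. The following sketch uses the example periods from the schedule above (90 days for alert details, 14 days for raw messages); the names `RETENTION` and `is_expired`, and the `legal_hold` flag that models the "unless required" exception, are assumptions made for illustration.

```python
from datetime import datetime, timedelta

# Example periods from the schedule above; adjust to your own policy.
RETENTION = {
    "alert_details": timedelta(days=90),
    "raw_message": timedelta(days=14),
}


def is_expired(record_type: str, created_at: datetime, now: datetime,
               legal_hold: bool = False) -> bool:
    """Return True when a record has passed its retention period.

    `legal_hold` models the "unless required" exception: records under
    hold are never reported as expired.
    """
    if legal_hold:
        return False
    return now - created_at > RETENTION[record_type]
```

A scheduled job could call `is_expired` for each stored record and delete those that return `True`, logging the deletion without logging the content.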

Legal and policy checks (high level)

- Know applicable local/national laws: data protection (e.g., GDPR in the EU), children’s online protections (e.g., COPPA in the U.S.), education records (e.g., FERPA in the U.S.), and mandatory reporting laws.

- Build processes that meet or exceed the legal floor, document compliance, and retain records for audits.

- Include a policy for law enforcement requests: require legal review and minimal disclosure.

Testing, evaluation and continuous improvement

- Run red‑teaming and safety testing: simulate edge cases, slang, non‑literal language.

- Measure precision, recall, and — crucially — downstream outcomes (did flagged learners get effective support?).

- Keep a feedback loop from human reviewers to modelers: false positives should be used to refine patterns, not to silence detection.

- Publish an annual transparency report for stakeholders summarizing alerts, outcomes, and improvements (anonymized).
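Precision and recall can be computed directly from reviewer logs. The sketch below assumes you already have counts of confirmed flags (true positives), dismissed flags (false positives), and concerns discovered by other means that the system missed (false negatives). `supported_share` is a hypothetical, deliberately crude stand-in for a downstream outcome measure; what counts as effective support has to be defined locally.

```python
def precision_recall(tp: int, fp: int, fn: int):
    """Return (precision, recall); a value is None where it is undefined."""
    precision = tp / (tp + fp) if (tp + fp) else None
    recall = tp / (tp + fn) if (tp + fn) else None
    return precision, recall


def supported_share(confirmed_flags: int, supported: int):
    """Share of human-confirmed flags where the learner received support."""
    if confirmed_flags == 0:
        return None
    return supported / confirmed_flags
```

For example, with 8 confirmed flags, 32 dismissed flags and 2 missed concerns, precision is 0.2 and recall is 0.8: the system catches most concerns, but reviewers spend most of their time on false alarms.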

Sample risk assessment activity (15–30 minutes)

- Pick an AI use case in your context (chat moderation, forum monitoring, attendance anomaly detection).

- For that case, answer:

  - What harms are we trying to prevent?

  - What data do we absolutely need?

  - Who will see alerts and what is their training level?

  - What are the top 3 failure scenarios (false positive, false negative, malicious manipulation)?

  - What’s our minimum viable escalation path (e.g., private teacher check, counselor referral, emergency)?

- Share decisions with a peer group and revise based on feedback.

Short roleplay (30 minutes)

- Roles: learner, teacher/reviewer, counselor, product manager.

- Scenario: system flags a chat containing ambiguous language (“I can’t do this anymore”).

- Play the steps: reviewer checks context, decides triage level, contacts learner with a supportive script, documents the interaction, reports outcome.

- Debrief: what went well? Where were assumptions? How did consent/notice play out?

Template: Basic monitoring policy (editable)

- Purpose: limited to learner safety and well‑being.

- Scope: which services and learner groups are included.

- Data types: list (chat text, forum posts, participation logs — no voice/images unless explicit consent).

- Access & roles: who receives alerts; who authorizes outreach.

- Response times: emergency (immediate), high (24 hours), low (1 week).

- Retention: alert metadata 90 days; raw content 14 days.

- Training: annual trauma‑informed response and privacy training for all reviewers.

- Audit: quarterly review of flags, accuracy and outcomes with stakeholder input.
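The response times in this template can be turned into deadlines that a reviewer dashboard or audit script checks. This is a sketch under stated assumptions: the level names mirror the template, "immediate" is modeled as a zero-length window, and `RESPONSE_WINDOWS`, `response_due` and `is_overdue` are hypothetical names.

```python
from datetime import datetime, timedelta

# Windows copied from the template; "immediate" is modeled as zero delay.
RESPONSE_WINDOWS = {
    "emergency": timedelta(0),
    "high": timedelta(hours=24),
    "low": timedelta(weeks=1),
}


def response_due(level: str, flagged_at: datetime) -> datetime:
    """Return the latest time by which a reviewer should have responded."""
    return flagged_at + RESPONSE_WINDOWS[level]


def is_overdue(level: str, flagged_at: datetime, now: datetime) -> bool:
    """True when the response window for this flag has already passed."""
    return now > response_due(level, flagged_at)
```

Counting overdue flags per quarter gives the audit a concrete measure of whether the stated response times are being met.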

When AI should NOT be used (red flags)

- Continuous audio monitoring of classrooms without explicit consent and a strong, documented justification.

- Secret monitoring of private messages without guardian/learner notice under ordinary circumstances.

- Automated punitive decisions or scoring that could affect a learner’s status without human review.

- Using invasive biometric analysis (emotion recognition from faces) for safety without deep ethical review and policy clearance.

Simple monitoring decision flow (text form)

- AI flag → does the signal meet the threshold for human review?

  - No → system logs the signal and continues monitoring.

  - Yes → reviewer assesses context:

    - Immediate safety risk → follow emergency protocol (notify guardians + services).

    - Non‑immediate concern → confidential check‑in with learner; offer resources; document.

    - False positive → log and use for model tuning; do not change learner status.

- Record outcome and time to resolution.

What to tell learners and families (example FAQ bullet points)

- “What is being monitored?” Give a short, specific list.

- “Why?” To keep learners safe and connect them to support quickly.

- “Who sees alerts?” Only a small, trained team; no automated punishments.

- “Can I opt out?” Explain options and any tradeoffs (e.g., some features require monitoring to function).

- “How long is my data kept?” Be specific.

- “How do I question or appeal an action?” Provide contact point and timeline.

Further reading and resources

- Look for up‑to‑date guidance from child protection NGOs, education ministries, and data protection authorities in your country.

- Consult trauma‑informed care resources before designing outreach scripts.

- Engage learners and families in co‑design sessions — using their feedback improves accuracy and trust.

Closing thought

AI can be a helpful early‑warning system — but only when it’s used as a tool for humans who are trained, compassionate and guided by clear policies. Prioritize consent, minimum intrusion, transparency and consistent human judgment. If you implement a system, test it, publish what you learn, and keep refining with the people it affects.
